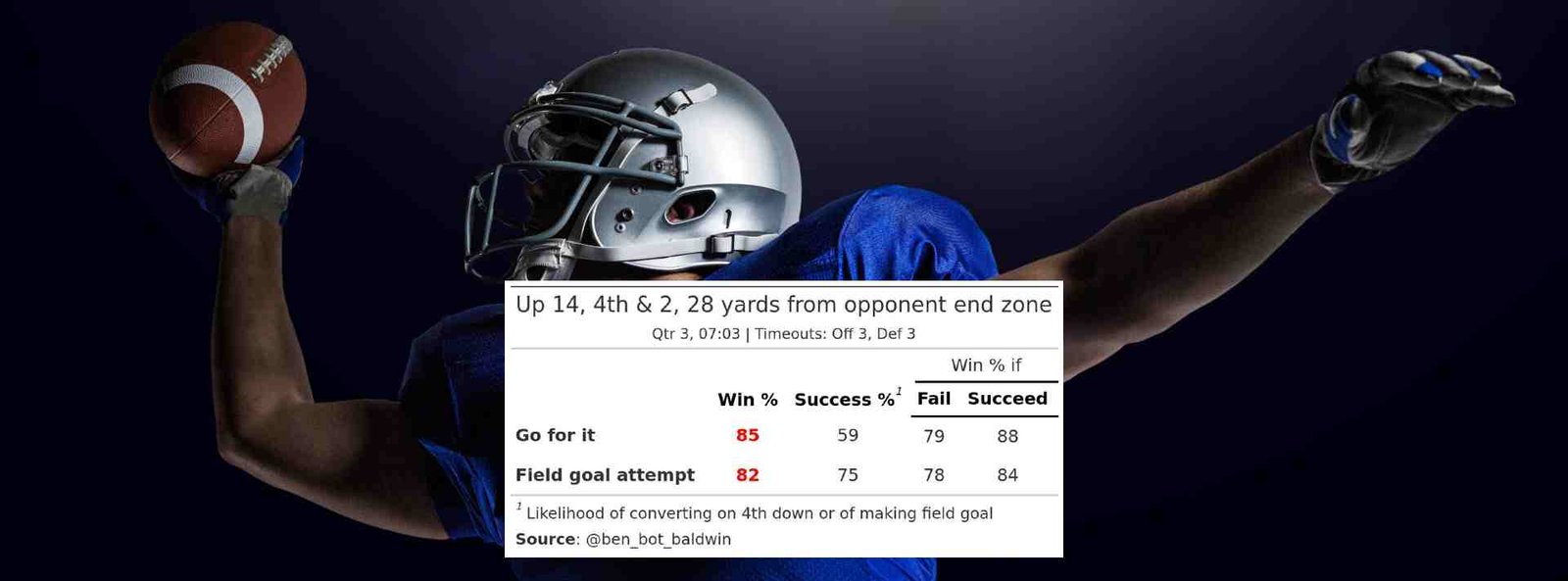

In just a week, the San Francisco 49ers and the Kansas City Chiefs will battle it out in the 58th Super Bowl in Las Vegas. It’s a thrilling matchup, but one that almost featured the Detroit Lions, in their first-ever Super Bowl, instead of the 49ers. In the NFC Championship game, the Lions were leading the 49ers by 14 points midway through the third quarter and were in position to extend their lead with a field goal. However, Coach Dan Campbell’s decision to go for it on fourth down backfired, and the Lions ultimately lost their shot at reaching the Super Bowl for the first time.

Critics and the media were quick to question Campbell’s call, but in his defense, the decision was guided by modern-day analytics. A data-driven model suggested that going for it on fourth down gave the team a higher probability of winning than attempting a field goal.
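To make that comparison concrete, here is a minimal sketch of how such a model might weigh the two options. Every number in it is invented for illustration and does not come from the Lions’ model or any real one, and the function name `expected_win_prob` is hypothetical. The idea is simply to weight the win probability after each outcome by how likely that outcome is.

```python
def expected_win_prob(p_success, wp_if_success, wp_if_failure):
    """Weight the win probability after each outcome by that outcome's likelihood."""
    return p_success * wp_if_success + (1 - p_success) * wp_if_failure


# Hypothetical inputs, chosen only to illustrate the arithmetic.
# p_success: chance the play works; wp_*: win probability afterward.
go_for_it = expected_win_prob(p_success=0.60, wp_if_success=0.93, wp_if_failure=0.85)
field_goal = expected_win_prob(p_success=0.65, wp_if_success=0.91, wp_if_failure=0.84)

recommendation = "go for it" if go_for_it > field_goal else "kick the field goal"
print(f"go for it: {go_for_it:.3f}, field goal: {field_goal:.3f} -> {recommendation}")
```

With these made-up inputs the two options land about a percentage point apart, so a small change in any estimate could flip the recommendation. The output is only as reliable as the probabilities fed into it.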

In today’s world, decision-making, whether in sports or business, often relies on data-driven models. The Federal Reserve cites data as a key factor in its interest rate decisions, and CEOs frequently use data-driven algorithms for everyday business choices. Proponents argue that data-driven decision-making is the most objective way to make the right calls.

However, recent incidents have shed light on the pitfalls of relying solely on data-driven algorithms. In 2021, Zillow, a real estate marketplace, faced significant setbacks due to flawed home value projections generated by its data-driven algorithm “Zestimate.” This miscalculation led Zillow to shut down its Zillow Offers home-buying operation, resulting in a 25% workforce reduction and substantial financial losses.

Similarly, in 2019, a health care risk-prediction algorithm used on millions of people in the U.S. exhibited racial bias, as it failed to accurately assess the needs of severely ill Black patients. This is just one example of the many data science and analytics projects that falter due to issues such as insufficient data, bias, and ethical concerns.

The stakes are even higher when it comes to decisions based on AI models, which are inherently driven by data. Amazon’s AI-powered recruiting software, an effort that began in 2014, is a notable example. The system, biased toward male candidates, reflected the gender imbalance in its training data, penalizing résumés that contained the word “women’s” and downgrading graduates of all-women’s colleges.

While AI-powered, data-driven decision-making has proven successful in numerous situations, it remains a double-edged sword. Context and human judgment play a crucial role in avoiding pitfalls. The Detroit Lions’ heart-wrenching loss in the NFC Championship game serves as a poignant reminder of the challenges and complexities associated with relying solely on data-driven decisions. As we walk this fine line, it’s essential to strike a balance between leveraging the power of data and incorporating human wisdom.

More Blogs From Rathin Sinha
